The Rollout Plan That Has All Five Components but Isn’t Execution-Grade

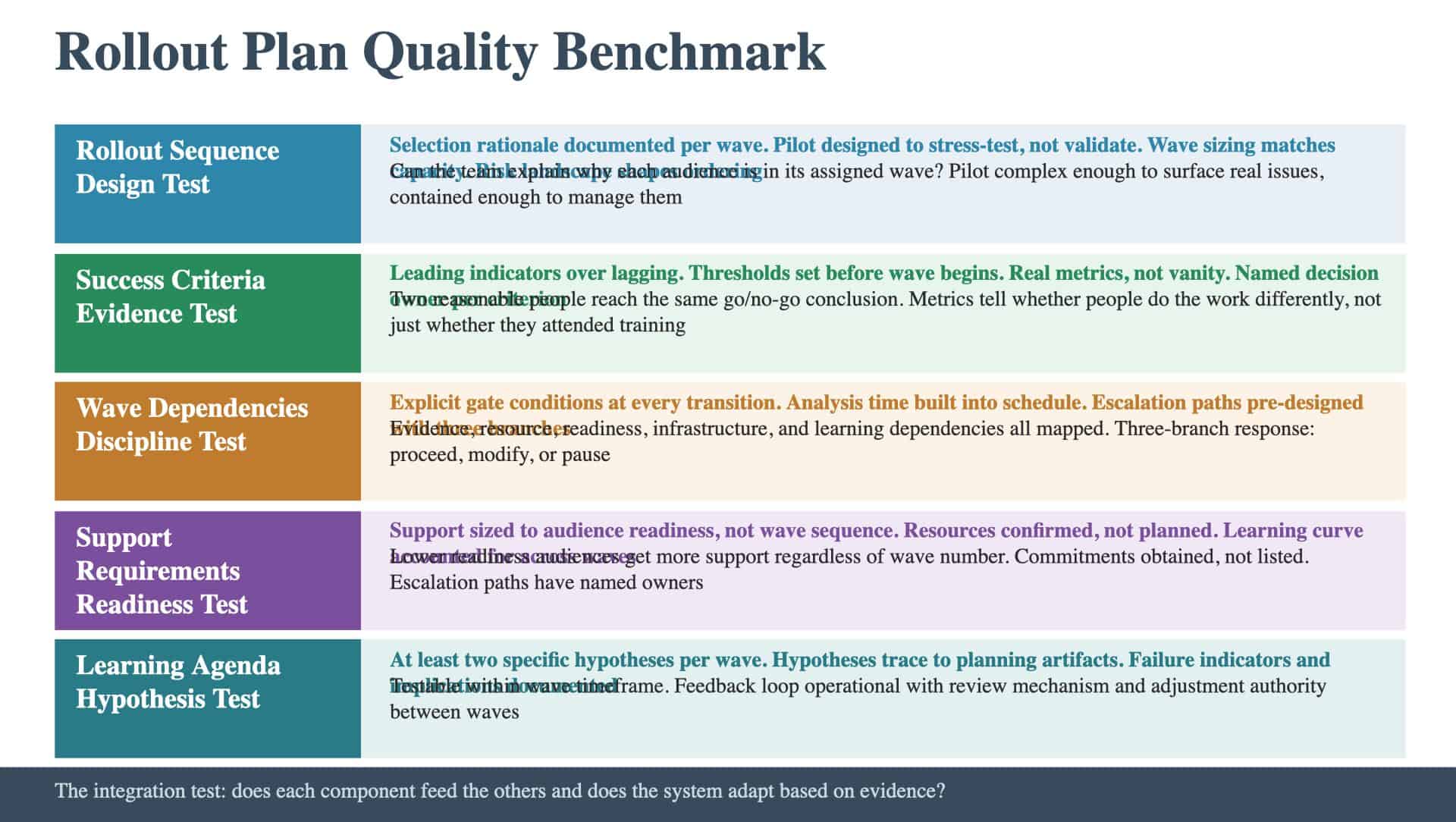

A program at this score level has the structural elements in place. There is a rollout sequence with defined waves. There are success criteria. There is a dependency map. There are support requirements. There is a learning agenda. The artifact covers the right territory. The question is whether each component meets the quality bar that separates a planning document from a tool the team can execute against. A Rollout Plan can have all five components and still fail if the sequence is defaulted rather than designed, the criteria are vanity metrics, the dependencies lack escalation paths, the support doesn’t match readiness, or the learning agenda is too vague to test. Here is the quality benchmark across all five components.

Rollout Sequence: The Design Test

The quality bar for the rollout sequence is intentional design. The team can explain why each audience is in its assigned wave and what would change if the sequence were different. Execution-grade means: Selection rationale is documented for every wave. Which audiences are in which wave, and why. The rationale should reference specific criteria: learning value, risk management, momentum building, or resource availability. If the only rationale is “they volunteered” or “their leader is supportive,” the sequence is defaulted rather than designed. The pilot is designed to stress-test, not validate. The pilot environment should be complex enough to identify real issues while contained enough that failures don’t cascade. If the pilot uses the friendliest audience in the easiest conditions, it will produce a positive result that reveals nothing about how the change performs under pressure. Wave sizing matches organizational capacity. Each wave is right-sized to the training, support, and leadership attention available. If waves are too large, support gets diluted. If waves are too small, the rollout extends beyond the organization’s patience. The sizing should reflect resource reality, not just the desired timeline. The sequence reflects the risk landscape. The risk assessment done earlier in the method should inform wave placement. High-risk areas might warrant earlier inclusion (to identify issues in a controlled environment) or later placement (to let the team build capability first). The sequence should show evidence that the risk landscape shaped the ordering.

Success Criteria: The Evidence Test

The quality bar for success criteria is that they produce evidence, not anecdotes. Execution-grade means: Metrics are leading indicators, not lagging. Adoption rates within the first two weeks, error rates during transition, escalation frequency, time-to-completion: these tell the team early whether things are working. Revenue impact and full adoption rates take too long to materialize for go/no-go decisions between waves. Thresholds are set before the wave begins. Pre-set thresholds prevent retroactive rationalization. If the team sets thresholds after seeing data, they’ll set them to match the data. The discipline of pre-commitment creates genuine evaluation. The criteria distinguish real metrics from vanity metrics. “Training completed” measures attendance. “Users completing the new process without escalation” measures adoption. The test: does this metric tell the team whether people are doing the work differently? Each criterion has a decision owner. A named individual with the authority to delay the next wave. If the decision owner is the same person under pressure to maintain the timeline, the criteria will be rationalized rather than enforced. Two reasonable people would reach the same conclusion. The criteria are specific enough that evaluation is objective. Not “high adoption” but “75 percent of target users completed at least three transactions in the new system during the first two weeks.”

Wave Dependencies: The Discipline Test

The quality bar for wave dependencies is gating discipline with escalation design. Execution-grade means: Every transition has explicit gate conditions. The team can state what must be true before each wave begins. These include evidence conditions (criteria met), resource conditions (support available), readiness conditions (audience prepared), and learning conditions (hypotheses evaluated). Analysis time is built into the schedule. The gap between waves includes explicit time for data review, learning agenda evaluation, and plan adjustment. If Wave 2 starts before Wave 1 data is analyzed, the phased approach is cosmetic. Escalation paths are pre-designed. For each transition, the team has documented what happens if dependencies aren’t met. The three branches are clear: proceed as planned, proceed with modifications (specified), or pause. The decision owner is named. The criteria for choosing between branches are defined. Dependencies extend beyond evidence. The map includes resource dependencies (are trainers available?), readiness dependencies (has the audience been prepared?), infrastructure dependencies (are systems configured?), and learning dependencies (have hypotheses been evaluated?). Programs that only track evidence dependencies miss the operational prerequisites.

Support Requirements: The Readiness-Matched Test

The quality bar for support requirements is alignment between support levels and audience readiness. Execution-grade means: Support is sized to audience readiness, not wave sequence. Later waves don’t automatically get less support. The readiness assessment from the Change Plan determines support allocation. Audiences with lower readiness scores get higher support levels regardless of which wave they’re in. Resources are confirmed, not just planned. For each wave, the team has commitments from the people who will provide training, staffing, and escalation support. A plan that lists required resources without confirming their availability is a wish list. The support model accounts for the learning curve. Early waves need more support per user because the support team itself is learning. Later waves benefit from that experience but may face different challenges. The plan reflects this trajectory. Escalation paths have named owners. For issues that front-line support can’t resolve, the escalation path specifies who handles them, what the response time expectations are, and how issues are tracked to resolution.

Learning Agenda: The Hypothesis Test

The quality bar for the learning agenda is testable specificity. Execution-grade means: Each wave has at least two specific hypotheses. Not “learn how the rollout works” but “validate that users can complete the new workflow in under five minutes without escalation.” Each hypothesis is testable within the wave’s timeframe. Hypotheses trace to planning artifacts. High-priority risks from the risk register become hypotheses. Readiness gaps from the change plan become hypotheses. Critical roadmap assumptions become hypotheses. The learning agenda is the operational expression of the program’s risk and readiness analysis. Failure indicators and implications are documented. For each hypothesis, the team has defined what evidence would disprove it and what would change as a result. If the hypothesis is wrong, the implication specifies a concrete adjustment to the plan for subsequent waves. The feedback loop is operational. There is a review mechanism between waves that evaluates each hypothesis, proposes adjustments, and has the authority to change the plan. Without the feedback loop, the learning agenda produces observations but not improvements.

The Integration Test

The execution-grade test for the entire Rollout Plan is whether the five components function as a system. The sequence feeds the criteria (each wave has its own success metrics). The criteria feed the dependencies (unmet criteria block progression). The dependencies feed the support requirements (what resources are needed at each gate). The learning agenda feeds back into all four components (what the team learned changes the plan). The question is whether the team builds a Rollout Plan where each component feeds every other and the system adapts based on evidence: or whether it produces five parallel sections that check the structural boxes while the deployment runs on calendar and hope.

Keep Reading

Explore the foundations and common gaps: