The Change Plan That Looks Complete but Isn’t Execution-Grade

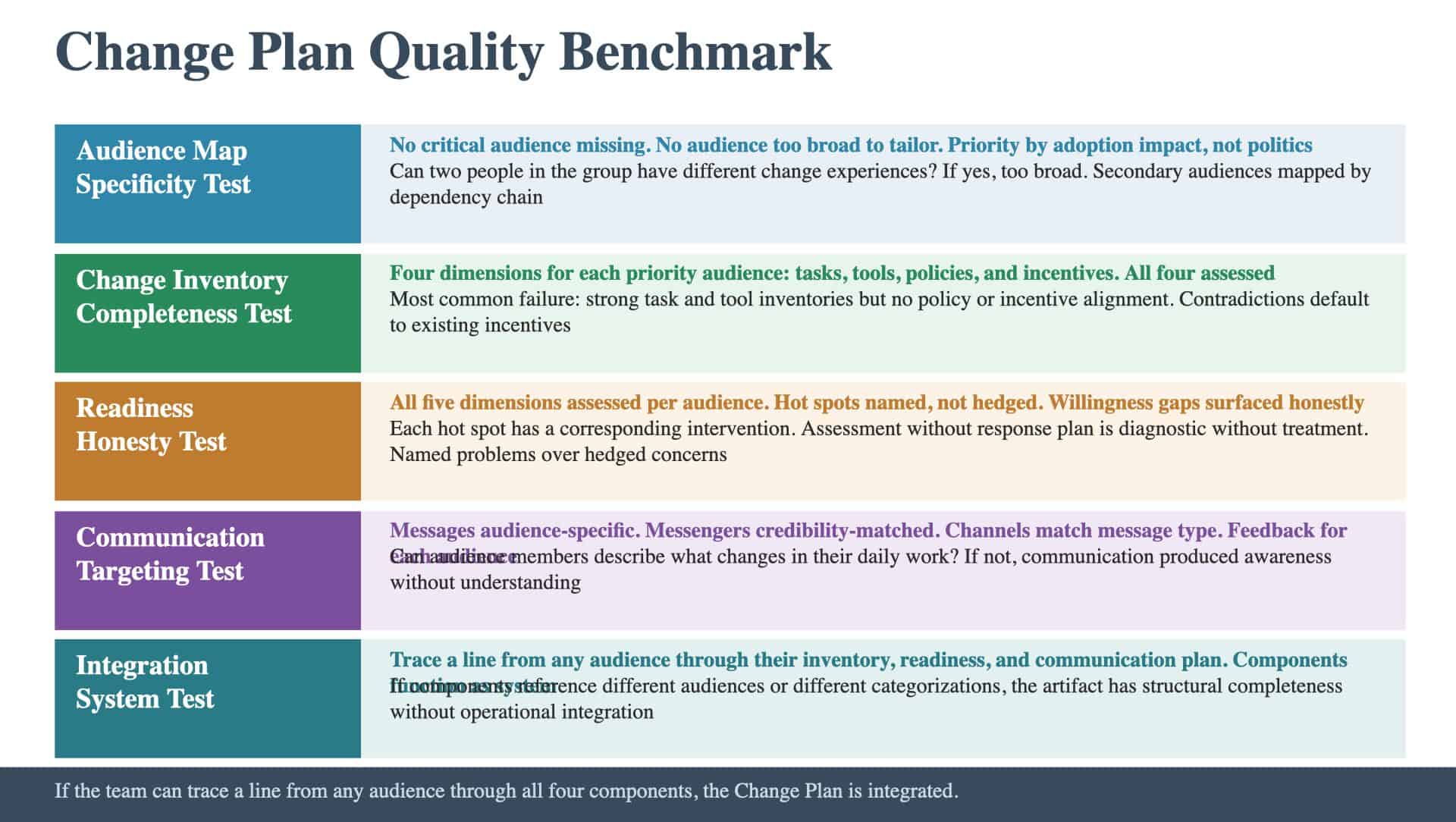

A program at this score level has all four components of a Change Plan in place. The audience map, change inventory, readiness assessment, and communication plan all exist; the artifact covers the right territory. The question is whether it meets the quality bar that separates a planning document from an execution tool. A Change Plan that checks every structural box can still fail if the audience map is too broad, the change inventory misses a dimension, the readiness assessment avoids the hard truths, or the communication plan lacks feedback mechanisms. Here is the quality benchmark across all four components, stated as what the team should be able to demonstrate when the artifact is execution-grade.

Audience Map: The Specificity Test

The quality bar for the audience map is a single question: can the team identify every group whose non-adoption would threaten the program? Execution-grade means:

- No critical audience is missing. The map covers primary audiences (groups whose behavior is directly targeted) and secondary audiences (groups whose work changes because of upstream or downstream dependencies). Programs that score well here have walked the dependency chain for every major change and identified the cascade effects.

- No audience is described so broadly that the change approach can’t be tailored. “Operations” is not an audience. “Store managers who will need to learn the new inventory system” is an audience. The test: if two people in the described group would have meaningfully different change experiences, the audience definition is too broad.

- Priority is based on impact, not politics. Audiences are ranked by how critical their adoption is to program success, not by organizational seniority. A frontline team whose non-adoption would stop the program outranks a leadership group whose buy-in is helpful but not determinative.

- The map connects to the stakeholder map without duplicating it. The team can articulate which stakeholders are also audiences (they appear on both maps) and which are not (they influence but don’t change). The stakeholder mapping feeds the audience map but doesn’t replace it.

Change Inventory: The Completeness Test

The quality bar for the change inventory is dimensional completeness: for each priority audience, the team can describe what’s changing across all four dimensions.

- Tasks. What will they do differently? New processes, workflows, responsibilities. The test: can the team describe a day in the life of this audience after the change, and is it meaningfully different from today?

- Tools. What will they use to do it? New systems, platforms, interfaces. The test: has the team identified every tool change, including the tools people will stop using?

- Policies. What rules will govern their work? New standards, approval processes, compliance requirements. The test: do the new policies align with the new tasks, or do they create contradictions?

- Incentives. What motivates compliance? New metrics, performance criteria, reward structures. The test: does the incentive structure reward the new behavior, or does it still reward the old behavior?

The most common failure at this level is dimensional incompleteness. The team has strong task and tool inventories but hasn’t addressed policy and incentive alignment. The change inventory describes what people will do differently and what they’ll use, but doesn’t address whether the rules and rewards support the new behavior. This creates contradictions that people resolve by defaulting to whatever the existing incentive structure rewards.
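For teams that keep the change inventory in a spreadsheet or structured file, the completeness test can be run mechanically. The sketch below is illustrative only: the dictionary layout, the `DIMENSIONS` tuple, and the `missing_dimensions` function are assumptions made for this example, not part of the Change Plan method.

```python
# Hypothetical layout: {audience: {dimension: [described changes]}}.
DIMENSIONS = ("tasks", "tools", "policies", "incentives")


def missing_dimensions(inventory):
    """Return {audience: [dimensions with no recorded entry]}."""
    gaps = {}
    for audience, entry in inventory.items():
        # A dimension counts as missing if it is absent or has an empty list.
        missing = [d for d in DIMENSIONS if not entry.get(d)]
        if missing:
            gaps[audience] = missing
    return gaps


example = {
    "store managers": {
        "tasks": ["daily stock counts move to the new workflow"],
        "tools": ["new inventory system"],
    },
}
print(missing_dimensions(example))
```

In this sketch an empty dimension is treated as unreviewed. If nothing changes for an audience on a given dimension, recording an explicit entry such as "no change" keeps that decision visible instead of leaving it ambiguous.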

Readiness Assessment: The Honesty Test

The quality bar for the readiness assessment is honesty across all five dimensions: awareness, understanding, capability, willingness, and capacity. Execution-grade means:

- All five dimensions are assessed for each priority audience. If the assessment covers awareness and leadership alignment but skips capability, willingness, or capacity, it measures the least predictive factors. A person who is aware and has leadership support but lacks capability, willingness, or capacity will not adopt the change.

- Hot spots are named, not hedged. The assessment identifies specific audience-change combinations where adoption is at risk and states the nature of the risk. “The procurement team has zero training hours on the new process and is simultaneously managing a vendor consolidation” is a named hot spot. “Some audiences may require additional support” is a hedge that allows inaction.

- Willingness gaps are surfaced, not avoided. Some audiences have rational reasons to resist: the change reduces their autonomy or shifts power away from their function. An execution-grade assessment names these dynamics rather than reporting uniform enthusiasm.

- Change saturation is evaluated. The assessment maps the broader change landscape for each priority audience. If an audience is absorbing multiple significant changes simultaneously, the assessment flags this as a capacity constraint regardless of their scores on other dimensions.

- The assessment has a response plan. Each hot spot has a corresponding intervention: training for capability gaps, different messengers for willingness gaps, sequencing adjustments for capacity gaps. A readiness assessment without a response plan is a diagnostic without a treatment.

Communication Plan: The Targeting Test

The quality bar for the communication plan is audience specificity with feedback. Execution-grade means:

- Messages are audience-specific, not generic. Each priority audience receives communication tailored to their specific changes from the change inventory. The test: if an audience member read their communication, could they describe what changes in their daily work, when, and what they need to do to prepare?

- Messengers are credibility-matched. For each audience and message type, the plan identifies who delivers the message based on who has credibility with that group on that topic. The test: is the messenger someone this audience trusts on this subject, or is the messenger chosen for organizational convenience?

- Channels match the message type. Information that requires dialogue (what’s changing in someone’s daily work) travels through channels that allow questions. Information that’s broadcast-appropriate (program milestones, timeline updates) can use broadcast channels. The test: are important messages about specific changes being buried in broadcast channels?

- Feedback mechanisms exist for each audience. The plan specifies how the team will know whether communication produced understanding, not just awareness. The test: after each communication cycle, can the team report what each audience understands about the change, not just that messages were sent?

- Timing is sequenced to the program timeline and the audience’s needs. Communication arrives before the change, not concurrent with it. Audiences that change first are communicated to first. The test: will any audience encounter the change before they’ve received preparation?

The Integration Test

Beyond the component-level benchmarks, the execution-grade test for the entire Change Plan is integration. The four components should function as a system, not as independent documents. The audience map drives the change inventory (each audience has a corresponding inventory entry). The change inventory drives the readiness assessment (each change is assessed for each audience). Both drive the communication plan (messages are tailored to audiences based on their changes and readiness). If the team can trace a line from any audience on the map through their change inventory, their readiness assessment, and their communication plan, the artifact is integrated. If the components exist as parallel documents that reference different audiences or use different categorizations, the artifact has structural completeness without operational integration. Two sub-artifacts anchor this integration in practice. The Impact Assessment provides the quantitative backbone that connects audience-level changes to program-level consequences. Teams that build a Change Plan where every component feeds every other create a system that self-corrects; teams that produce four parallel documents check the structural boxes while the adoption gaps between them go unaddressed.
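The traceability check described above can be sketched in a few lines for teams that hold the four components as structured data. Everything here is a hypothetical illustration: the `ChangePlan` fields and the `integration_gaps` function are assumed names, and a real plan would carry far more detail per entry.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ChangePlan:
    audiences: set[str]                 # names on the audience map
    inventory: dict[str, list[str]]     # audience -> changes affecting it
    readiness: set[tuple[str, str]]     # (audience, change) pairs assessed
    communications: set[str]            # audiences with a tailored plan entry


def integration_gaps(plan: ChangePlan) -> list[str]:
    """List every place where the line from audience to communication breaks."""
    gaps = []
    for audience in sorted(plan.audiences):
        changes = plan.inventory.get(audience, [])
        if not changes:
            gaps.append(f"{audience}: no change inventory entry")
        for change in changes:
            if (audience, change) not in plan.readiness:
                gaps.append(f"{audience}: readiness not assessed for '{change}'")
        if audience not in plan.communications:
            gaps.append(f"{audience}: no communication plan entry")
    # Components that name audiences absent from the map signal parallel documents.
    referenced = set(plan.inventory) | {a for a, _ in plan.readiness} | plan.communications
    for name in sorted(referenced - plan.audiences):
        gaps.append(f"{name}: referenced outside the audience map")
    return gaps


plan = ChangePlan(
    audiences={"store managers", "procurement team"},
    inventory={
        "store managers": ["new inventory system"],
        "procurement team": ["new approval process"],
    },
    readiness={("store managers", "new inventory system")},
    communications={"store managers", "Operations"},
)
for gap in integration_gaps(plan):
    print(gap)
```

An empty result would suggest the components at least reference the same audiences and changes. It says nothing about whether the content of each entry is honest or specific, which the component-level tests still have to establish.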

Go Deeper: The Change Plan

This article covers one dimension of the Change Plan, the seventh of nine artifacts in the Planning & Roadmapping method. The Change Plan answers the board question: “How will people adopt this?”
