The Rollout That Repeated Its Mistakes at Scale

The program completed four waves on schedule. Each wave deployed the same process to a new set of audiences. Each wave encountered similar problems: integration issues, training gaps, process ambiguities that forced users to create workarounds. The post-mortem discovered that Wave 1 problems replicated in Waves 2, 3, and 4 because nobody captured the lessons from earlier waves and fed them into the plan for later ones. The same training gaps appeared in every wave because the training wasn’t updated. The same integration issues surfaced because nobody cataloged them after Wave 1. The program didn’t lack waves. It lacked a learning agenda. Deployment happened on schedule. Learning didn’t happen at all.

What a Learning Agenda Is and Why It Matters

A learning agenda is a structured list of hypotheses that each wave is designed to test. It turns the rollout from a deployment exercise into a discovery process. The difference is operational. A deployment calendar asks: “When do we launch each wave?” A learning agenda asks: “What do we want each wave to teach us?” The calendar treats waves as execution milestones. The learning agenda treats waves as experiments that produce evidence.

This matters because rollout plans are hypotheses, not contracts. The team builds the plan based on assumptions about how the change will be received, how quickly people will adopt, where resistance will emerge, and what support will be needed. Some of those assumptions will be wrong. The learning agenda creates the mechanism for discovering which assumptions were wrong and adjusting the plan before the next wave.

From Vague Objectives to Specific Hypotheses

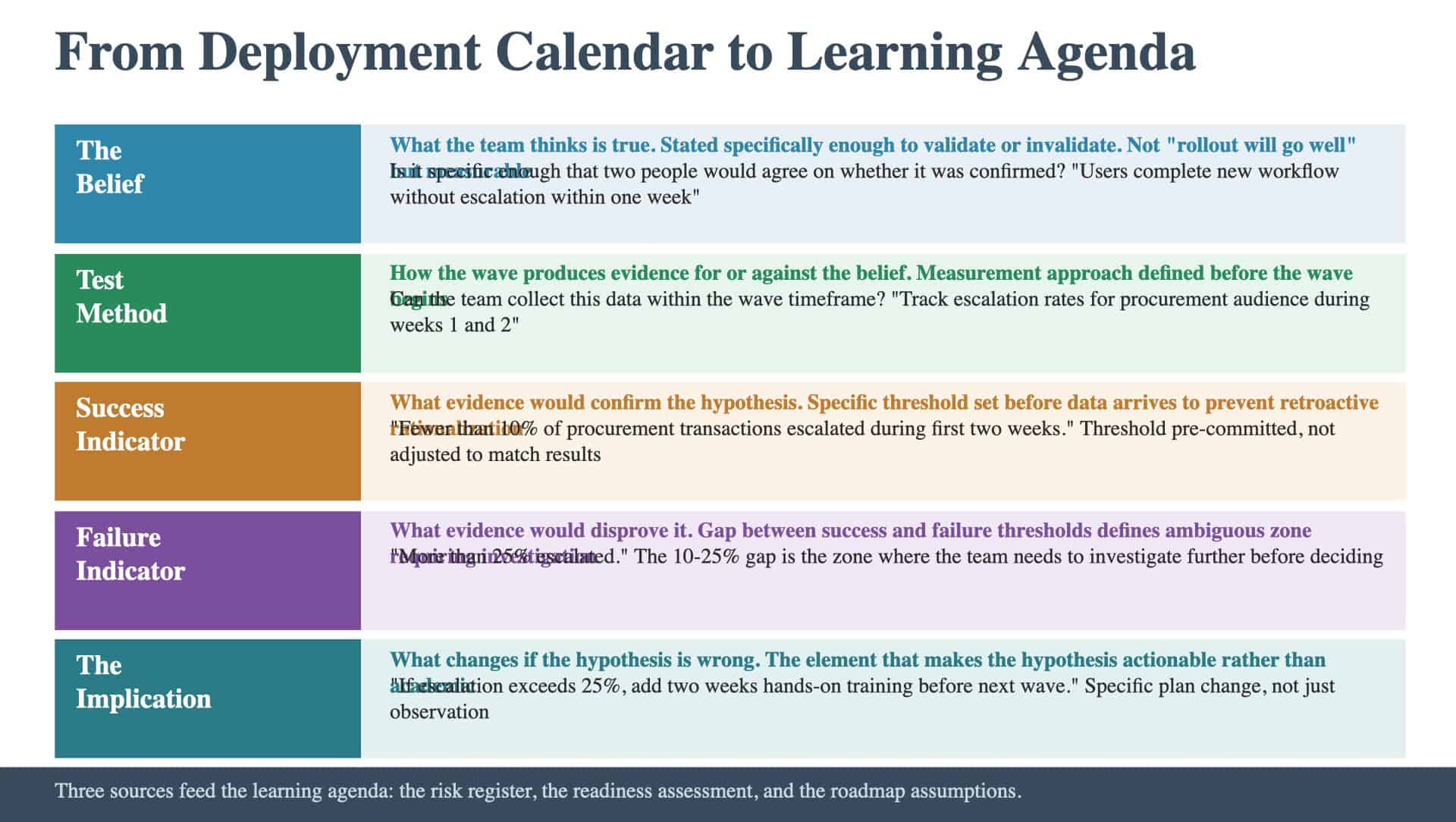

The most common failure in learning agendas is vagueness. “Learn how the rollout works” is an objective, not a hypothesis. “Understand adoption patterns” is an aspiration, not a testable proposition. A hypothesis has five elements:

The belief. What the team thinks is true. Stated specifically enough to be validated or invalidated. “Users will be able to complete the new procurement workflow without escalation within the first week” is a belief. “The rollout will go well” is not.

The test method. How the wave will produce evidence for or against the belief. “Track escalation rates for the procurement audience during weeks 1 and 2 of the wave” is a test method.

The success indicator. What evidence would confirm the hypothesis. “Fewer than 10 percent of procurement transactions escalated during the first two weeks” is a success indicator.

The failure indicator. What evidence would disprove it. “More than 25 percent of procurement transactions escalated” is a failure indicator. The gap between success and failure thresholds (10 to 25 percent in this example) defines the ambiguous zone where the team needs to investigate further.

The implication. What changes if the hypothesis is wrong. “If escalation rates exceed 25 percent, add two weeks of hands-on training before the next wave’s procurement audience deploys” is an implication. This is what makes the hypothesis actionable rather than academic.
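One way to keep all five elements explicit is to record each hypothesis as a small data structure. The sketch below is illustrative only: the `Hypothesis` class, its field names, and the `Verdict` labels are assumptions made for this example, not part of any particular tool. It uses the procurement escalation thresholds from the example above and treats anything between the two thresholds as the ambiguous zone.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    CONFIRMED = "confirmed"
    DISPROVED = "disproved"
    AMBIGUOUS = "ambiguous"  # between the thresholds: investigate further


@dataclass(frozen=True)
class Hypothesis:
    """Hypothetical record of the five elements of a testable hypothesis."""
    belief: str
    test_method: str
    success_below: float  # observed rate under this value confirms the belief
    failure_above: float  # observed rate over this value disproves it
    implication: str      # what changes in the plan if the belief is disproved

    def evaluate(self, observed_rate: float) -> Verdict:
        """Classify an observed rate against the two thresholds."""
        if observed_rate < self.success_below:
            return Verdict.CONFIRMED
        if observed_rate > self.failure_above:
            return Verdict.DISPROVED
        return Verdict.AMBIGUOUS


procurement = Hypothesis(
    belief="Users can complete the new procurement workflow without "
           "escalation within the first week",
    test_method="Track escalation rates for the procurement audience "
                "during weeks 1 and 2 of the wave",
    success_below=0.10,
    failure_above=0.25,
    implication="Add two weeks of hands-on training before the next "
                "wave's procurement audience deploys",
)

# An assumed observation: 18 percent of transactions escalated.
verdict = procurement.evaluate(0.18)
print(verdict.value)
if verdict is Verdict.DISPROVED:
    print(procurement.implication)
```

Writing the thresholds down before the wave starts is the point of the exercise: the team commits in advance to what counts as confirmation and what counts as failure, so the review evaluates evidence instead of negotiating it.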

Where Hypotheses Come From

The learning agenda doesn’t require inventing hypotheses from scratch. They come from three sources already in the planning artifacts.

The risk register. Risks identified during the pre-mortem are natural hypotheses. If the risk register flagged “users may struggle with the new interface,” the learning agenda includes a hypothesis about interface usability. If the register flagged “the integration between System A and System B may fail under load,” the pilot includes a load test with a specific threshold.

The change plan readiness assessment. Readiness gaps are hypotheses about adoption. If the readiness assessment identified a capability gap in a specific audience, the learning agenda tests whether the training plan closes that gap. If the assessment identified a willingness gap, the learning agenda tests whether the communication approach is shifting attitudes.

The roadmap assumptions. The integrated roadmap was built on assumptions about timeline, resource availability, and dependency sequencing. The learning agenda tests whether those assumptions hold in practice. If the roadmap assumed a two-week training cycle, the pilot tests whether two weeks is sufficient.

The connection between these sources and the learning agenda is direct. Each high-priority risk becomes a hypothesis. Each readiness gap becomes a hypothesis. Each critical roadmap assumption becomes a hypothesis. The learning agenda is the operational expression of the program’s risk and readiness analysis.

The Learning Loop Between Waves

The learning agenda creates value only if there’s a feedback loop between waves. This requires two things:

Time. There must be sufficient time between waves to analyze the data, draw conclusions, and adjust the plan. If Wave 2 starts before Wave 1 data is reviewed, the learning agenda is decorative. The wave dependencies should include an explicit checkpoint for learning agenda review.

A review mechanism with authority to adjust. Someone must be responsible for consolidating the data, evaluating each hypothesis, and proposing plan adjustments. This isn’t a standing meeting that reviews status. It’s a working session that evaluates evidence against hypotheses and decides what changes. Critically, the review must have the authority to change the plan for subsequent waves. If the data shows the training approach isn’t working, the team needs to redesign training before the next wave. Without adjustment authority, the learning agenda produces observations but not improvements.
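The checkpoint between waves can be expressed as a simple gate. The following is a minimal sketch, not a prescribed process: `WaveReview`, `HypothesisResult`, and `blockers_for_next_wave` are hypothetical names, and the rule it encodes (the next wave waits until the data is reviewed and every disproved hypothesis has a plan adjustment) is one reasonable reading of the two requirements above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class HypothesisResult:
    name: str
    verdict: str                      # "confirmed", "disproved", or "ambiguous"
    adjustment_applied: bool = False  # has the next wave's plan been changed?


@dataclass
class WaveReview:
    wave: int
    data_reviewed: bool
    results: List[HypothesisResult] = field(default_factory=list)


def blockers_for_next_wave(review: WaveReview) -> List[str]:
    """Return the reasons the next wave should not start yet (empty if none)."""
    blockers = []
    if not review.data_reviewed:
        blockers.append(f"Wave {review.wave} data has not been reviewed")
    for result in review.results:
        if result.verdict == "disproved" and not result.adjustment_applied:
            blockers.append(
                f"'{result.name}' was disproved but the plan was not adjusted"
            )
    return blockers


# Assumed example data for a Wave 1 review.
review = WaveReview(
    wave=1,
    data_reviewed=True,
    results=[
        HypothesisResult("training closes the capability gap", "disproved"),
        HypothesisResult("procurement escalation stays low", "confirmed"),
    ],
)

for reason in blockers_for_next_wave(review):
    print(reason)
```

A gate like this only has teeth if the review owns the decision. If the list of blockers can be overridden by the deployment calendar, the check records observations without forcing improvements.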

The Compound Effect

The value of a learning agenda compounds across waves. Wave 1 produces five data points that reshape the Wave 2 plan. Wave 2 produces five more that reshape Wave 3. By Wave 4, the team is operating on evidence accumulated across three prior waves. Programs without learning agendas don’t get this compound effect. Each wave is planned from the same original assumptions. Problems repeat. The team discovers at the end of the rollout that it knew enough after Wave 1 to prevent most of the problems in Waves 2 through 4, but the knowledge was never captured and applied.

The learning agenda is what makes a wave-based rollout genuinely different from a big-bang launch. Without it, waves are just a big bang in slow motion: the same plan deployed at different times without adaptation. The question is whether each wave incorporates everything the team learned from the ones before it, or whether it deploys the same plan at different times and calls it phased.
