A Launch Date Is Not a Rollout Plan

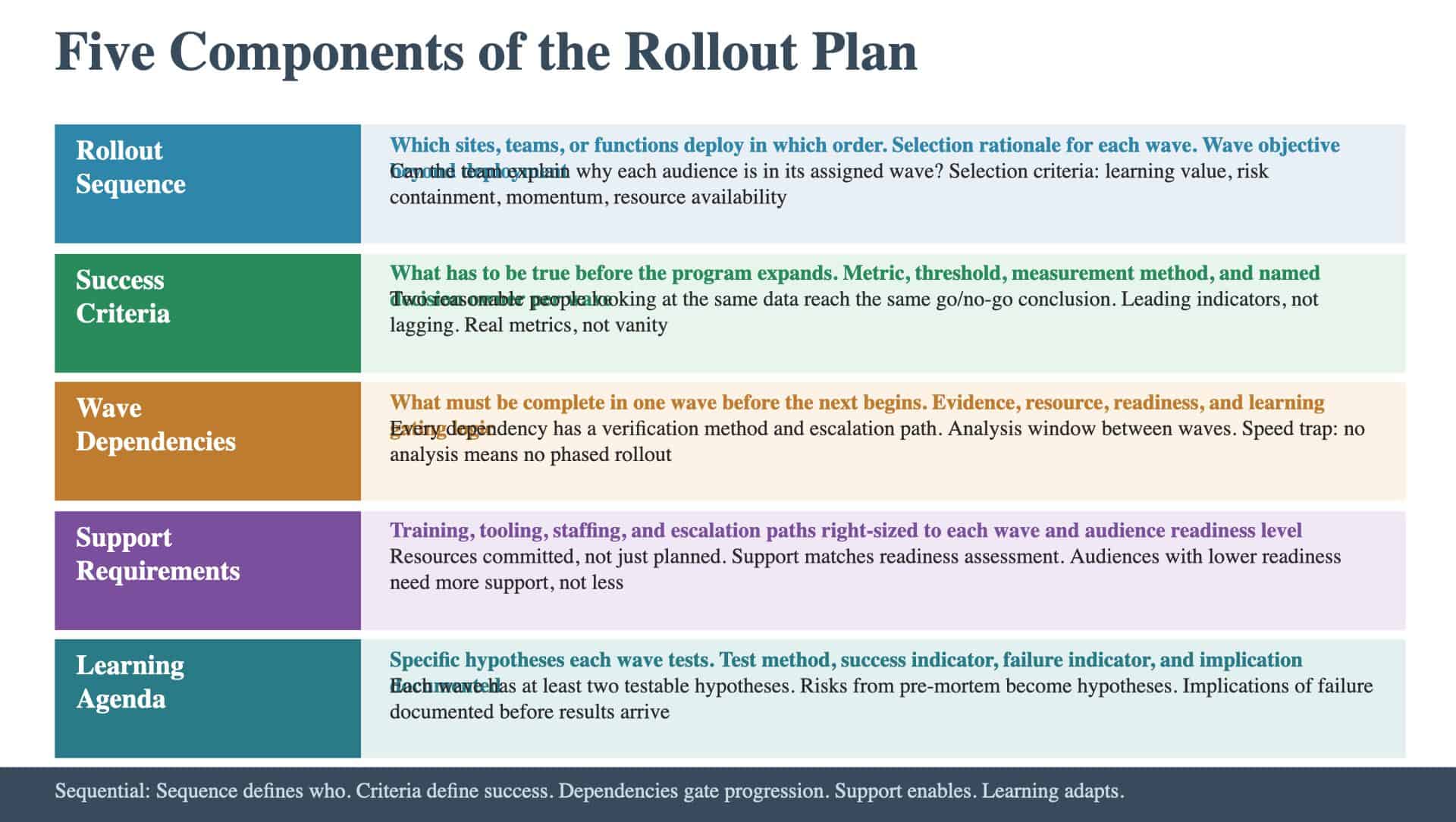

Most programs have a launch date. Many have a rough sequence: pilot first, then expand. Few have the five components that turn a deployment calendar into an artifact the team can actually execute against. The difference matters because a launch date tells the organization when something happens. A Rollout Plan tells the organization how it happens, what has to be true before it expands, what the team will learn along the way, and what support will be in place at each stage. Here is what each component contains and what separates a useful version from a placeholder.

Component 1: The Rollout Sequence

The Rollout Sequence defines which sites, teams, or functions deploy in which order, and why the order was chosen. The sequence captures for each wave:

- Wave identifier (Pilot, Wave 1, Wave 2, etc.)

- Included audiences (specific sites, teams, or functions in this wave)

- Selection rationale (why these audiences are in this wave)

- Timing (target start and end dates)

- Wave objective (what this wave is designed to accomplish beyond deployment)

The selection rationale is the most important field and the one most often left blank. There should be an intentional reason why certain audiences are in early waves and others are in later ones. Common selection logic includes:

- Learning value. Start with environments complex enough to identify real issues. The pilot should stress-test, not validate.

- Risk management. Avoid starting with the most critical operations. If the pilot fails, the consequences should be containable.

- Momentum building. Early waves that succeed create proof points for later audiences. Selecting an audience likely to adopt well can build organizational confidence.

- Resource availability. Match wave timing to when training and support resources are available.

The quality bar: the team can explain why each audience is in its assigned wave and what would change if the sequence were different.

Component 2: Success Criteria

Success Criteria define what has to be true before the program expands from one wave to the next. They make go/no-go decisions explicit rather than political. For each wave, the criteria include:

- Metric (what’s measured)

- Threshold (what value constitutes success)

- Measurement method (how the data is collected)

- Decision owner (who makes the go/no-go call)

The distinction between leading and lagging metrics matters here. Lagging metrics like revenue impact or full adoption take too long to materialize. The team needs leading indicators that tell them early whether things are working: adoption rates in the first two weeks, error rates during the transition period, user feedback scores, process compliance rates. The vanity-metrics trap is defining criteria that are easy to hit but don’t indicate real adoption. “Training completed” is a vanity metric because it measures attendance, not capability. “Users completing the new process without escalation within the first week” measures actual adoption. The quality bar: the criteria are specific enough that two reasonable people looking at the same data would reach the same go/no-go conclusion.

Component 3: Wave Dependencies

Wave Dependencies map what has to be complete in one wave before the next can begin. They create the gating discipline that prevents premature expansion. The dependency map captures:

- Dependency (what must be true)

- Source wave (which wave produces this condition)

- Target wave (which wave requires this condition)

- Verification method (how the team confirms the dependency is met)

- Escalation path (what happens if the dependency isn’t met)

The escalation path is critical and often missing. If a wave doesn’t meet its success criteria, who makes the call? What are the options: delay the next wave, proceed with modifications, scale back, or pause entirely? These decisions should be designed in advance, not improvised under pressure. The speed trap is compressing waves so tightly that there’s no time to learn between them. If Wave 2 starts before Wave 1 data is analyzed, the team isn’t doing a phased rollout. They’re doing a simultaneous launch with extra administrative overhead. The dependency map should include explicit time for data analysis and plan adjustment between waves. The quality bar: the team can trace every wave dependency, identify what would block progression, and describe the decision process for when a dependency isn’t met.

Component 4: Support Requirements

Support Requirements define what resources each wave needs to succeed: training, tooling, staffing, and escalation paths. For each wave, the requirements include:

- Training needs (what audiences need to learn, when, and how)

- Tooling requirements (what systems, access, and configurations must be in place)

- Staffing (how many support personnel are needed and what roles they fill)

- Escalation paths (who handles issues that front-line support can’t resolve)

The support-cliff trap is providing intensive support during the pilot and then withdrawing it for later waves. The assumption is that the team has learned everything during the pilot and later waves can run lean. In practice, later waves often include audiences with lower change readiness who need more support, not less. The readiness assessment from the Change Plan should inform support allocation: audiences with lower readiness scores need higher support levels. Support requirements should also account for the learning curve. Early waves typically need more support per user because the support team itself is still learning. Later waves benefit from that experience but may face different challenges. The plan should reflect this trajectory rather than applying a uniform support model. The quality bar: for each wave, the team can confirm that resources are committed (not just planned) and that the support level matches the audience’s readiness.

Component 5: The Learning Agenda

The Learning Agenda lists the specific questions each wave is designed to answer. It turns the rollout from a deployment exercise into a discovery process. For each wave, the agenda captures:

- Hypothesis (what the team believes is true)

- Test method (how the wave will validate or invalidate the hypothesis)

- Success indicator (what evidence would confirm the hypothesis)

- Failure indicator (what evidence would disprove it)

- Implication (what changes if the hypothesis is wrong)

The specificity of the hypotheses determines the value of the learning agenda. “Learn how it works” is too vague. “Validate that users can complete the new workflow in under five minutes without escalation” is a hypothesis. “Determine whether the new approval process reduces cycle time by at least 20 percent” is a hypothesis. Each should be testable within the wave’s timeframe. The learning agenda connects the Rollout Plan back to the risk landscape. Risks identified during the pre-mortem become hypotheses in the learning agenda. If the risk register flagged “users may struggle with the new interface,” the learning agenda includes a hypothesis about interface usability with a specific test method and threshold. The quality bar: each wave has at least two specific hypotheses with defined test methods, and the implications of failure are documented.

How the Five Components Work Together

The components are sequential. The Rollout Sequence defines who deploys when. Success Criteria define what “worked” means. Wave Dependencies define what gates progression. Support Requirements define what resources are needed. The Learning Agenda defines what the team wants to discover. Together, they create a deployment approach that’s evidence-based rather than calendar-based. The question is whether the team advances because the data supports expansion: or whether it advances because the date arrived and nobody built the framework to say otherwise.

Keep Reading

Ready to close specific gaps in your Rollout Plan? These articles show you how: