The Readiness Report That Said Everything Was on Track

The program team presents its readiness assessment. The slide shows green across the board. Leadership is aligned. Communication is in progress. Training is planned. The assessment concludes that the organization is ready for the change.

Four weeks into execution, adoption stalls. Two regional teams push back on timing. The frontline managers in one division haven’t received any training because their calendars are consumed by a parallel initiative. A key audience group, the procurement team whose process changes fundamentally, wasn’t included in the assessment at all.

The readiness assessment didn’t fail because the team was dishonest. It failed because it assessed the wrong things. It measured awareness (“Do people know this is coming?”) and leadership alignment (“Are the executives on board?”) but missed the dimensions that actually predict adoption.

The Five Dimensions That Actually Predict Adoption

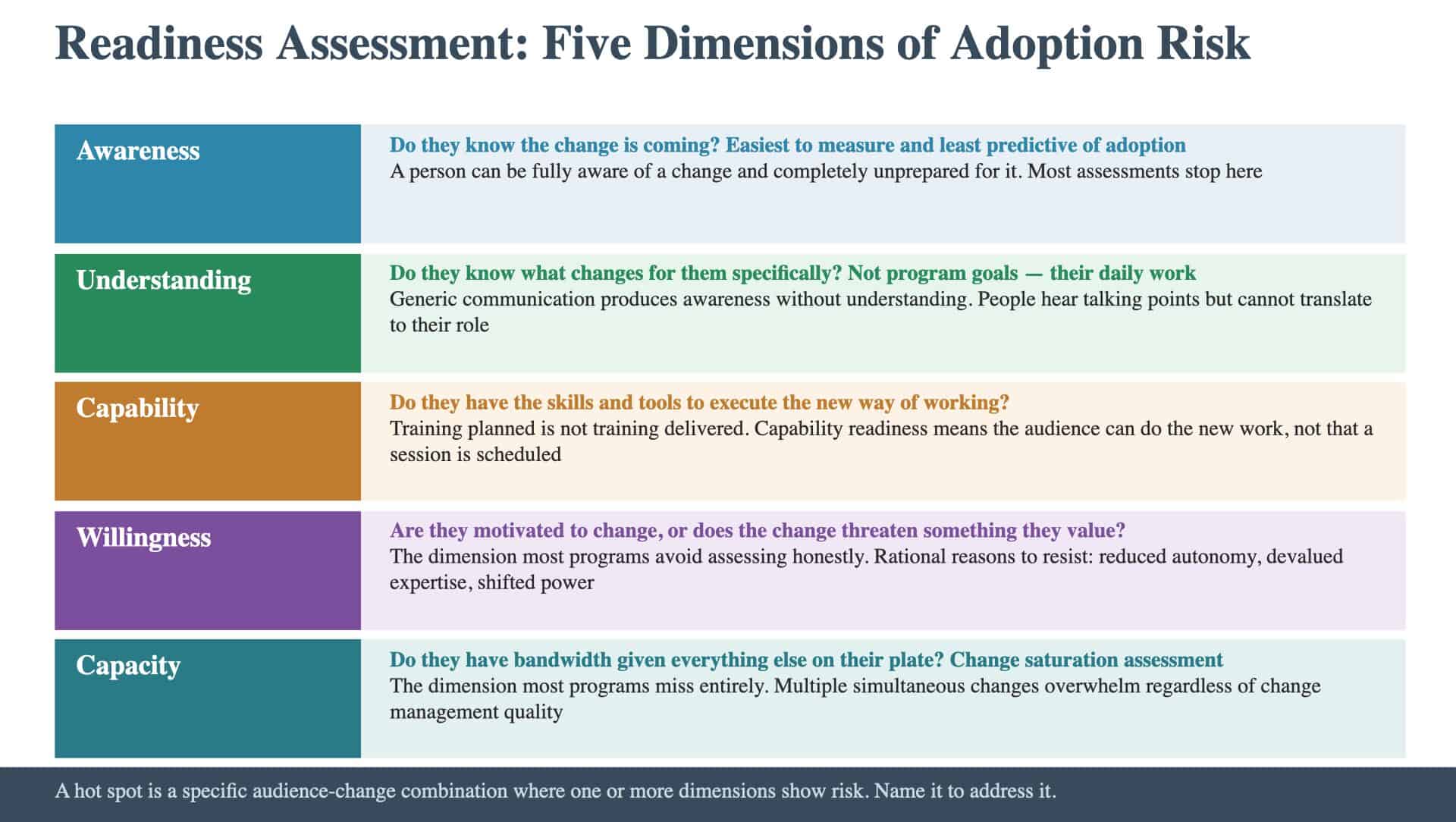

Readiness is not a single variable. It’s the composite of five dimensions, and most assessments measure only two of them.

- Awareness. Do they know the change is coming? This is the dimension most programs assess. It’s the easiest to measure and the least predictive of adoption. A person can be fully aware of a change and completely unprepared for it.

- Understanding. Do they know what’s changing for them specifically? Not the program’s goals or the executive sponsor’s vision, but the concrete differences in their daily work. This is where generic communication fails. People hear the program’s talking points but can’t translate them into what it means for their role.

- Capability. Do they have the skills and tools to execute the new way of working? Training plans are common. Training that’s been delivered, absorbed, and practiced before the change goes live is rare. Capability readiness means the audience can actually do the new work, not that someone has scheduled a session to teach them.

- Willingness. Are they motivated to change, or does the change threaten something they value? Willingness is the dimension most programs avoid assessing honestly. It requires acknowledging that some people have rational reasons to resist. The change might reduce their autonomy or shift power away from their function. These aren’t irrational responses. They’re predictable reactions that the readiness assessment should surface, not ignore. The Resistance Analysis provides the structured framework for cataloging these willingness gaps by audience, turning political intuition into documented planning inputs.

- Capacity. Do they have bandwidth for this change given everything else on their plate? This is the dimension most programs miss entirely. Capacity is about the broader change landscape: what else is hitting this audience, how much attention they have available, and whether this initiative is competing for the same cognitive and operational bandwidth as three other priorities.

Why Capacity Is the Dimension That Breaks Everything

Organizations have a limited capacity for change. This is not a metaphor. It is an operational constraint with measurable consequences. When a program lands on top of three other major changes, adoption suffers regardless of how good the change management is. People are not resisting the change. They are overwhelmed by the cumulative burden of too many changes competing for the same attention and energy.

Change saturation is the most common source of resistance that “comes out of nowhere.” Leadership is aligned. The program has strong governance. And then during rollout, a division pushes back. The program team interprets this as resistance. In reality, it’s a capacity problem. The division is in the middle of a reorganization, a system migration, and a budget cycle. This initiative, regardless of its merit, arrived at a moment when the organization’s change capacity was already consumed.

A readiness assessment that evaluates capacity asks: What else is hitting these audiences? How many other changes are they absorbing? What’s their bandwidth? If the answer is “very little,” the team can adjust timing or make the case for deprioritizing competing initiatives. Without that assessment, the team discovers saturation during execution, when the options are far more limited.

What Makes a Hot Spot a Hot Spot

A hot spot is a specific audience-change combination where adoption is at risk. It’s not a general concern about change management. It’s a named population facing a named change with a specific readiness gap.

Hot spots emerge from the intersection of the five dimensions. An audience that scores high on awareness but low on capability is a hot spot: they know what’s coming but can’t do it. An audience that scores high on capability but low on willingness is a different kind of hot spot: they can do it but don’t want to. An audience that scores low on capacity is a hot spot regardless of the other dimensions: they might want to change and know how, but they don’t have the bandwidth.

The value of naming hot spots is that it converts an abstract readiness report into an actionable intervention plan. For each hot spot, the team can ask: what specific action closes this gap? A capability gap requires training. A willingness gap requires a different messenger. A capacity gap requires sequencing changes or reducing competing demands.
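For teams that keep readiness scores in a spreadsheet or script, the hot spot logic above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a prescribed tool: the 1-to-5 scale, the threshold of 3, the names `Readiness` and `find_hot_spots`, and the placeholder action for dimensions the text gives no intervention for are all hypothetical.

```python
from dataclasses import dataclass

# The five dimensions named in this article.
DIMENSIONS = ("awareness", "understanding", "capability", "willingness", "capacity")

# Interventions taken from the examples in the text. Dimensions without
# a named intervention fall back to a placeholder (an assumption).
INTERVENTIONS = {
    "capability": "deliver training before go-live",
    "willingness": "use a different messenger",
    "capacity": "sequence changes or reduce competing demands",
}


@dataclass
class Readiness:
    audience: str  # the named population
    change: str    # the named change
    scores: dict   # dimension -> score on an assumed 1-5 scale


def find_hot_spots(readiness, threshold=3):
    """Return (dimension, intervention) pairs for every gap.

    A dimension counts as a gap when its score is below the threshold.
    A dimension that was never assessed is treated as a gap too (assumption).
    """
    gaps = []
    for dim in DIMENSIONS:
        if readiness.scores.get(dim, 0) < threshold:
            gaps.append((dim, INTERVENTIONS.get(dim, "define an intervention")))
    return gaps


# Illustrative scores only, not real data.
procurement = Readiness(
    audience="Procurement team",
    change="New procurement process",
    scores={"awareness": 4, "understanding": 3, "capability": 1,
            "willingness": 4, "capacity": 2},
)

for dim, action in find_hot_spots(procurement):
    print(f"{procurement.audience} / {procurement.change}: {dim} gap -> {action}")
```

The sketch reports each gap by dimension rather than rolling the scores into one number, so every hot spot stays tied to a named audience, a named change, and a specific action.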

The Honesty Problem

The biggest obstacle to useful readiness assessment is not methodology. It is honesty. Readiness assessments that report to leadership have an inherent pressure to show green. Nobody wants to be the person who says the organization isn’t ready, especially if that message delays the program or questions leadership’s commitment. The result is assessments that acknowledge minor concerns while declaring overall readiness, even when the data says otherwise.

This is the checkbox trap applied to readiness. The assessment exists as an artifact. It says the right things in the right format. But it doesn’t reveal the real risks because identifying them would be uncomfortable.

The antidote is specificity. An assessment that names specific hot spots with specific evidence is harder to dismiss than one that reports general readiness levels. “The procurement team has had zero training hours on the new process and is simultaneously managing a vendor consolidation” is a hot spot with evidence. “Some audiences may need additional support” is a finding that allows everyone to nod and move on.

How to Move from What You Have to What You Need

Programs at this stage typically have some form of readiness tracking. The gap is usually in two areas: the dimensions being assessed and the honesty of the assessment.

- Check the dimensions. If the assessment covers awareness and leadership alignment but not capability, willingness, or capacity, it’s measuring the least predictive factors. Add the missing dimensions for each priority audience from the audience map.

- Check the specificity. Replace green-yellow-red ratings with named hot spots. For each audience-change combination where the team sees risk, document what the gap is, why it exists, and what intervention would close it.

- Check for capacity. Map the other changes hitting each priority audience. If any audience is absorbing multiple significant changes simultaneously, flag it as a capacity hot spot regardless of their scores on the other dimensions.
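The capacity check in the last step amounts to grouping the other changes by audience and flagging any audience with more than one. A minimal sketch, assuming the changes are listed as (audience, change) pairs. The function name `capacity_hot_spots`, the `max_concurrent` cutoff, and the audience label "Division A" are hypothetical.

```python
from collections import defaultdict


def capacity_hot_spots(changes, max_concurrent=1):
    """Group changes by audience and flag overloaded audiences.

    changes: iterable of (audience, change_name) pairs describing the
    other changes each audience is absorbing.
    Returns a dict of audience -> list of changes for every audience
    absorbing more than max_concurrent changes (assumed cutoff).
    """
    by_audience = defaultdict(list)
    for audience, change in changes:
        by_audience[audience].append(change)
    return {a: c for a, c in by_audience.items() if len(c) > max_concurrent}


# Illustrative landscape based on the examples in this article.
landscape = [
    ("Division A", "reorganization"),
    ("Division A", "system migration"),
    ("Division A", "budget cycle"),
    ("Procurement team", "vendor consolidation"),
]

for audience, load in capacity_hot_spots(landscape).items():
    print(f"Capacity hot spot: {audience} is absorbing {', '.join(load)}")
```

A count is a crude proxy for bandwidth. In practice the team would also weigh how significant each change is, which this sketch does not attempt.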

The question is whether the team identifies the hot spots before execution starts, or whether it discovers them when resistance emerges and the window for prevention has already closed.
