Having an Integrated Roadmap is not the same as having one that is execution-grade. Most programs produce some version of a roadmap during planning. Workstreams are listed. Milestones exist. Someone built a Gantt chart. The question is whether the artifact meets the quality bar required for it to do its job: giving the program team a realistic, stress-testable plan that shows how all the pieces connect and what sequence determines the end date. This article defines the quality benchmark across all five components of the Integrated Roadmap. It is written for leaders whose programs already have a roadmap and who want to evaluate whether that roadmap is execution-grade.

Component 1: Workstream Definitions

What execution-grade looks like

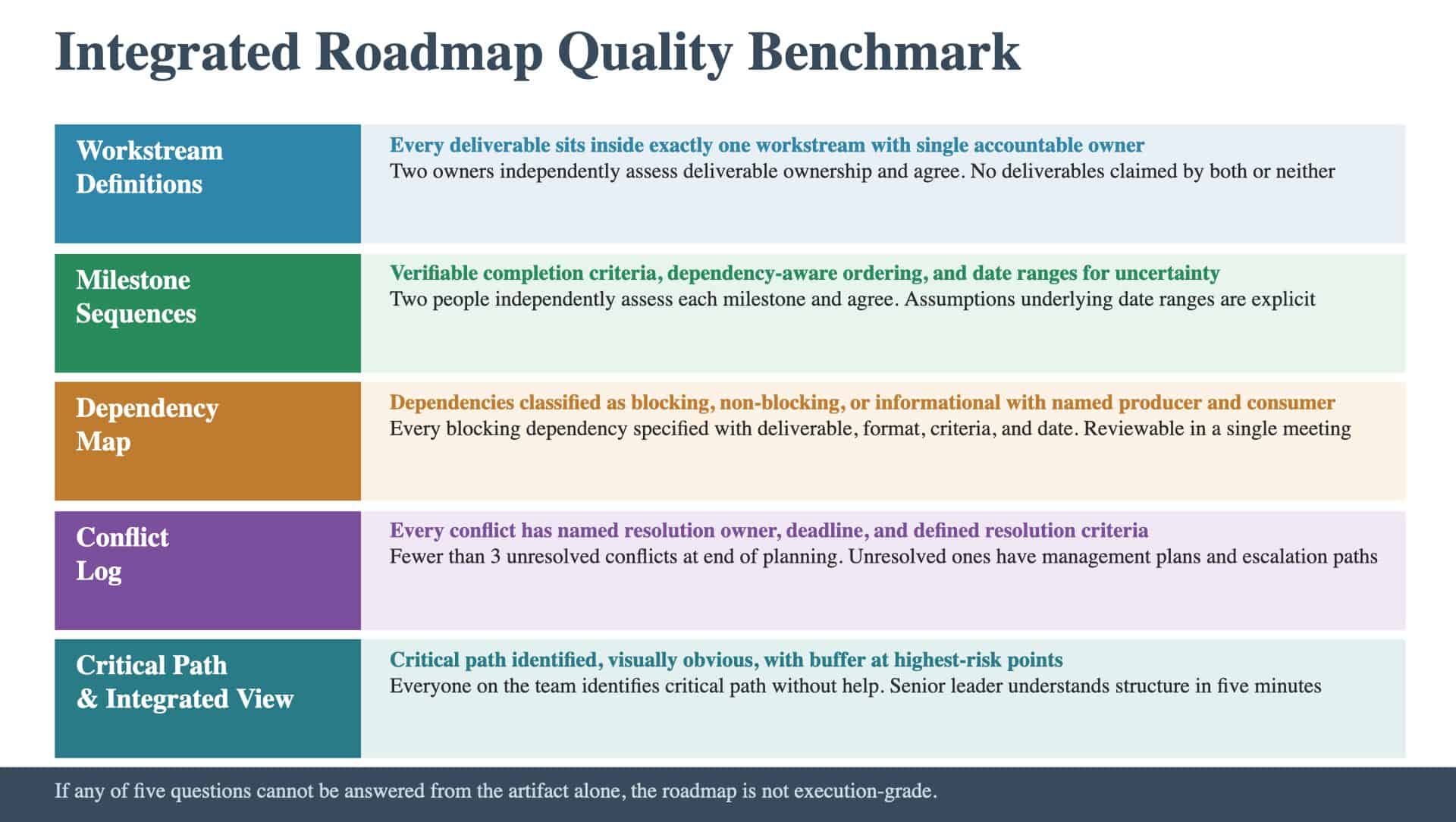

Workstreams have clear scope boundaries. Every deliverable in the program sits inside exactly one workstream. Each workstream has a single accountable owner. The boundaries between adjacent workstreams are explicit: where one ends and the next begins is unambiguous.

The test

- Can two workstream owners independently assess whether a given deliverable belongs to them and agree on the answer?

- Does every workstream have a single named owner (an individual, not a team)?

- Are the handoff points between adjacent workstreams explicitly documented?

- Are there any deliverables that sit between workstreams, claimed by neither or both?

“The architecture nobody builds” describes why workstream definitions are architectural decisions. If the boundaries are unclear, the roadmap will have integration gaps that surface during execution.
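The mechanical parts of this test can be checked against a simple inventory. The following is a minimal sketch, assuming the roadmap is available as a mapping of workstreams to an owner and a set of deliverables; the workstream names, owner names, and deliverables are hypothetical and exist only for illustration.

```python
from collections import defaultdict

# Hypothetical inventory: every name below is invented for illustration.
workstreams = {
    "Platform": {"owner": "A. Rivera", "deliverables": {"API gateway", "Auth service"}},
    "Data": {"owner": "J. Chen", "deliverables": {"Migration scripts", "Auth service"}},
    "Rollout": {"owner": "", "deliverables": {"Training plan"}},
}
program_deliverables = {
    "API gateway", "Auth service", "Migration scripts", "Training plan", "Cutover runbook",
}

def boundary_issues(workstreams, program_deliverables):
    """Report deliverables claimed by no workstream or by several, and workstreams with no owner."""
    claims = defaultdict(list)
    for name, ws in workstreams.items():
        for deliverable in ws["deliverables"]:
            claims[deliverable].append(name)
    return {
        "unclaimed": sorted(d for d in program_deliverables if d not in claims),
        "multiply_claimed": {d: sorted(names) for d, names in claims.items() if len(names) > 1},
        "missing_owner": sorted(n for n, ws in workstreams.items() if not ws["owner"].strip()),
    }

issues = boundary_issues(workstreams, program_deliverables)
print(issues)
```

A check like this only finds gaps in what has been written down. Whether two owners would independently agree on a boundary, and whether a named owner is an individual rather than a team alias, still needs a conversation.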

Component 2: Milestone Sequences

What execution-grade looks like

Each workstream has a sequence of milestones with verifiable completion criteria. “Complete design phase” is not verifiable; “Design spec signed off by Product and Engineering leads” is. Where uncertainty exists, the sequence uses date ranges rather than false-precision dates, with explicit assumptions about what would need to be true for the earlier date to hold. The milestones are dependency-aware: each milestone knows what it depends on upstream and what depends on it downstream. The ordering is logical (driven by dependencies), not just chronological (driven by calendar preference).

The test

- Could two people independently assess whether each milestone is met and agree?

- Are the completion criteria specific enough to create accountability?

- Where date ranges exist, are the assumptions underlying the range explicit?

- Does each milestone identify its upstream dependencies and downstream consumers?

“The roadmap that tells you nothing” describes roadmaps where milestones are vague enough that “on track” and “behind” are indistinguishable. Verifiable milestones are the foundation of honest status reporting.
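One way to make "logical, not just chronological" ordering concrete is a topological sort over the milestones' upstream dependencies. This is a sketch under stated assumptions: the milestone names are hypothetical, and each milestone is assumed to record the set of milestones it depends on. It uses `graphlib.TopologicalSorter` from the Python standard library (3.9 and later).

```python
from graphlib import TopologicalSorter

# Hypothetical milestones, each mapped to the set of milestones it depends on (upstream).
upstream = {
    "Design spec signed off": set(),
    "Test environment ready": set(),
    "API contract published": {"Design spec signed off"},
    "Integration test passed": {"API contract published", "Test environment ready"},
}

def logical_order(upstream):
    """Return milestones so that every dependency appears before the milestones that need it."""
    return list(TopologicalSorter(upstream).static_order())

def downstream_consumers(upstream):
    """Invert the upstream map: for each milestone, which milestones depend on it."""
    consumers = {m: set() for m in upstream}
    for milestone, deps in upstream.items():
        for dep in deps:
            consumers.setdefault(dep, set()).add(milestone)
    return consumers

order = logical_order(upstream)
consumers = downstream_consumers(upstream)
print(order)
print(consumers["API contract published"])
```

If the dependencies contain a loop, `static_order()` raises `graphlib.CycleError`, which is itself a useful finding: two milestones that each wait on the other cannot both be scheduled.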

Component 3: The Dependency Map

What execution-grade looks like

Cross-workstream dependencies are mapped, classified, and owned. Each dependency specifies who depends on whom, for what specific deliverable, in what format, against what acceptance criteria, and by when. Dependencies are classified as blocking (work cannot proceed), non-blocking (work could proceed but would benefit), or informational (awareness needed). Each dependency has a named producer and a named consumer. The map focuses on the dependencies that matter: blocking dependencies, high-risk dependencies, and dependencies on the critical path. The map is manageable: fifteen to twenty-five key dependencies, not two hundred.

The test

- Is every blocking dependency specified with deliverable, format, criteria, and date?

- Does every dependency have a named producer and named consumer (individuals, not teams)?

- Is the distinction between blocking, non-blocking, and informational clear?

- Can the program director review the entire dependency map in a single meeting?

“The complete deliverable map” describes the upstream work that feeds dependency mapping. If the deliverable inventory is incomplete, the dependency map has gaps.
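A dependency record can be sketched as a small data structure with a completeness check that mirrors the test above. The field names (`fmt`, `criteria`, `due`), the people, and the deliverables here are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

CLASSIFICATIONS = {"blocking", "non-blocking", "informational"}

@dataclass
class Dependency:
    producer: str                 # named individual who delivers
    consumer: str                 # named individual who receives
    deliverable: str
    classification: str           # one of CLASSIFICATIONS
    fmt: str = ""                 # agreed format of the deliverable
    criteria: str = ""            # how the consumer will accept it
    due: Optional[date] = None

def gaps(dep: Dependency) -> List[str]:
    """List the fields that keep this dependency from being fully specified."""
    problems = []
    if dep.classification not in CLASSIFICATIONS:
        problems.append("classification")
    if not dep.producer.strip():
        problems.append("producer")
    if not dep.consumer.strip():
        problems.append("consumer")
    if dep.classification == "blocking":
        # Blocking dependencies must carry the full specification.
        for field_name in ("deliverable", "fmt", "criteria"):
            if not getattr(dep, field_name).strip():
                problems.append(field_name)
        if dep.due is None:
            problems.append("due")
    return problems

# Hypothetical entries for illustration only.
complete = Dependency("P. Osei", "M. Lindqvist", "Customer schema v2", "blocking",
                      "JSON Schema file", "Validates against sample export", date(2025, 3, 14))
incomplete = Dependency("", "M. Lindqvist", "Pricing rules", "blocking")

print(gaps(complete))
print(gaps(incomplete))
```

Running `gaps` over the whole map gives the program director a short list of under-specified dependencies to resolve, rather than a two-hundred-row spreadsheet to read.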

Component 4: The Conflict Log

What execution-grade looks like

Every identified conflict (resource, timing, scope, assumption) has a named resolution owner, a resolution deadline, and defined resolution criteria. The log is actively managed: conflicts are tracked to resolution through a defined cadence. At the end of roadmap sessions, the log contains fewer than three unresolved conflicts, each with a documented management plan.

The test

- Does every conflict have a named individual resolution owner?

- Does every conflict have a resolution deadline based on when it would affect execution?

- Can the team objectively assess whether each conflict is resolved or still open?

- Are there fewer than three unresolved conflicts at the end of the planning phase?

- Do unresolved conflicts have documented management plans and escalation paths?

“Programs that failed with good plans” documents what happens when conflicts are identified during planning and left unresolved: they reappear during execution as crises, with higher resolution costs and fewer options.
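The conflict log test can be expressed the same way. This is a rough sketch, assuming each conflict is recorded with the fields named above; the conflict titles, owners, and dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

@dataclass
class Conflict:
    title: str
    kind: str                       # resource, timing, scope, or assumption
    owner: str                      # named individual resolution owner
    deadline: Optional[date]
    resolution_criteria: str
    resolved: bool = False
    management_plan: str = ""       # expected for anything still open

def log_passes(conflicts: List[Conflict], open_limit: int = 3) -> bool:
    """True when every conflict is fully specified, open ones have a plan,
    and fewer than open_limit conflicts remain unresolved."""
    open_items = [c for c in conflicts if not c.resolved]
    fully_specified = all(
        c.owner.strip() and c.deadline is not None and c.resolution_criteria.strip()
        for c in conflicts
    )
    open_have_plans = all(c.management_plan.strip() for c in open_items)
    return bool(fully_specified and open_have_plans and len(open_items) < open_limit)

# Hypothetical log entries for illustration only.
log = [
    Conflict("Shared QA team double-booked", "resource", "D. Okafor", date(2025, 2, 1),
             "QA allocation agreed by both workstream owners", resolved=True),
    Conflict("Vendor contract start date uncertain", "assumption", "L. Moreau", date(2025, 2, 15),
             "Countersigned contract received",
             management_plan="Escalate to sponsor if unsigned by deadline"),
]

print(log_passes(log))
```

The check cannot judge whether a deadline is based on when the conflict would affect execution; that remains a review question for the team.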

Component 5: The Critical Path and Integrated View

What execution-grade looks like

The critical path is identified and visually obvious in the integrated view. Everyone on the program team can trace the chain of blocking dependencies that determines the end date. Near-critical paths (chains close to the critical path in duration) are identified. Buffer exists at the highest-risk points along the critical path. The integrated view is readable: a senior leader can understand the program’s structure, timeline, key dependencies, and critical path within five minutes. The visualization shows all workstreams, their milestone sequences, cross-workstream dependencies, and the critical path as a first-class element.

The test

- Can everyone on the program team identify the critical path without help?

- Are near-critical paths identified and monitored?

- Does buffer exist at the highest-risk points on the critical path?

- Can a senior leader understand the program’s structure and critical path within five minutes of reviewing the integrated view?

- Does the visualization show dependencies, not just timelines?

“The roadmap that tells you nothing” contrasts execution-grade integrated views with the common alternative: timelines without dependencies, where dates float without connection to what drives them.
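The critical path and near-critical paths can be derived from durations and blocking dependencies with a standard forward and backward pass. The sketch below assumes four hypothetical activities with durations in days and a slack threshold of three days for "near-critical"; those values are illustrative assumptions, and a real program would choose its own threshold.

```python
from graphlib import TopologicalSorter

# Hypothetical activities: duration in days, and the activities each one is blocked by.
durations = {"A": 10, "B": 5, "C": 8, "D": 4}
preds = {"A": set(), "B": {"A"}, "C": {"A"}, "D": {"B", "C"}}

def schedule(durations, preds):
    """Return (end_day, slack_by_activity) using a forward and backward pass."""
    order = list(TopologicalSorter(preds).static_order())

    # Forward pass: earliest finish is the latest predecessor finish plus own duration.
    earliest_finish = {}
    for a in order:
        start = max((earliest_finish[p] for p in preds[a]), default=0)
        earliest_finish[a] = start + durations[a]
    end = max(earliest_finish.values())

    # Backward pass: latest finish is the earliest latest-start among successors.
    succs = {a: set() for a in durations}
    for a, ps in preds.items():
        for p in ps:
            succs[p].add(a)
    latest_finish = {}
    for a in reversed(order):
        latest_finish[a] = min((latest_finish[s] - durations[s] for s in succs[a]), default=end)

    slack = {a: latest_finish[a] - earliest_finish[a] for a in order}
    return end, slack

end, slack = schedule(durations, preds)
critical = sorted(a for a, s in slack.items() if s == 0)
near_critical = sorted(a for a, s in slack.items() if 0 < s <= 3)  # assumed threshold

# What happens to the end date if a critical-path activity slips by two days?
slipped_end, _ = schedule(dict(durations, C=durations["C"] + 2), preds)

print(end, critical, near_critical, slipped_end)
```

In this example a two-day slip on `C` moves the end date by two days, while the same slip on `B` would be absorbed by its slack. That difference is what the integrated view needs to make visible, and it is also where buffer decisions should start.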

The Integrated Quality Bar

The Integrated Roadmap passes the overall quality benchmark when all five components meet their individual tests and the artifact as a whole meets one additional standard: the program team, reading only the Integrated Roadmap, can determine what work happens in what order, what depends on what, where the highest risks to the timeline sit, and what the realistic end date is given known constraints. Specifically, the program team should be able to answer five questions from the artifact alone:

- What are the workstreams, who owns each, and where are the boundaries?

- What are the key milestones, and how will the team know when each is met?

- Which dependencies are blocking, and what is the management protocol for each?

- What conflicts exist, who owns them, and when will they be resolved?

- What is the critical path, and what happens to the end date if a critical-path activity slips?

If any of these questions cannot be answered from the artifact, the roadmap is not execution-grade.

The Iteration Test

One additional quality indicator distinguishes execution-grade roadmaps from first-draft roadmaps: evidence of iteration. The first version of any roadmap will be wrong. The question is whether the team iterated: built the roadmap, stress-tested it against dependencies and constraints, revised it, and stress-tested again.

Evidence of iteration includes:

- Milestone dates that moved from earlier drafts, indicating that dependency analysis changed the timeline.

- Conflicts that were surfaced and resolved, indicating that the integration exercise produced discoveries.

- Buffer built into the critical path, indicating that the team identified risk and designed around it.

A roadmap that did not change through the planning process was not stress-tested. The team either did not push hard enough on dependencies or treated the roadmap as a documentation exercise rather than a planning exercise. This one-pass trap is the most common quality failure at this level: the roadmap was built once and treated as complete.

Execution-grade roadmaps are built, broken, and rebuilt until they hold up under scrutiny. The question is whether yours has been through that cycle, or whether its first stress test will come during execution, when the cost of adjustment is highest.
